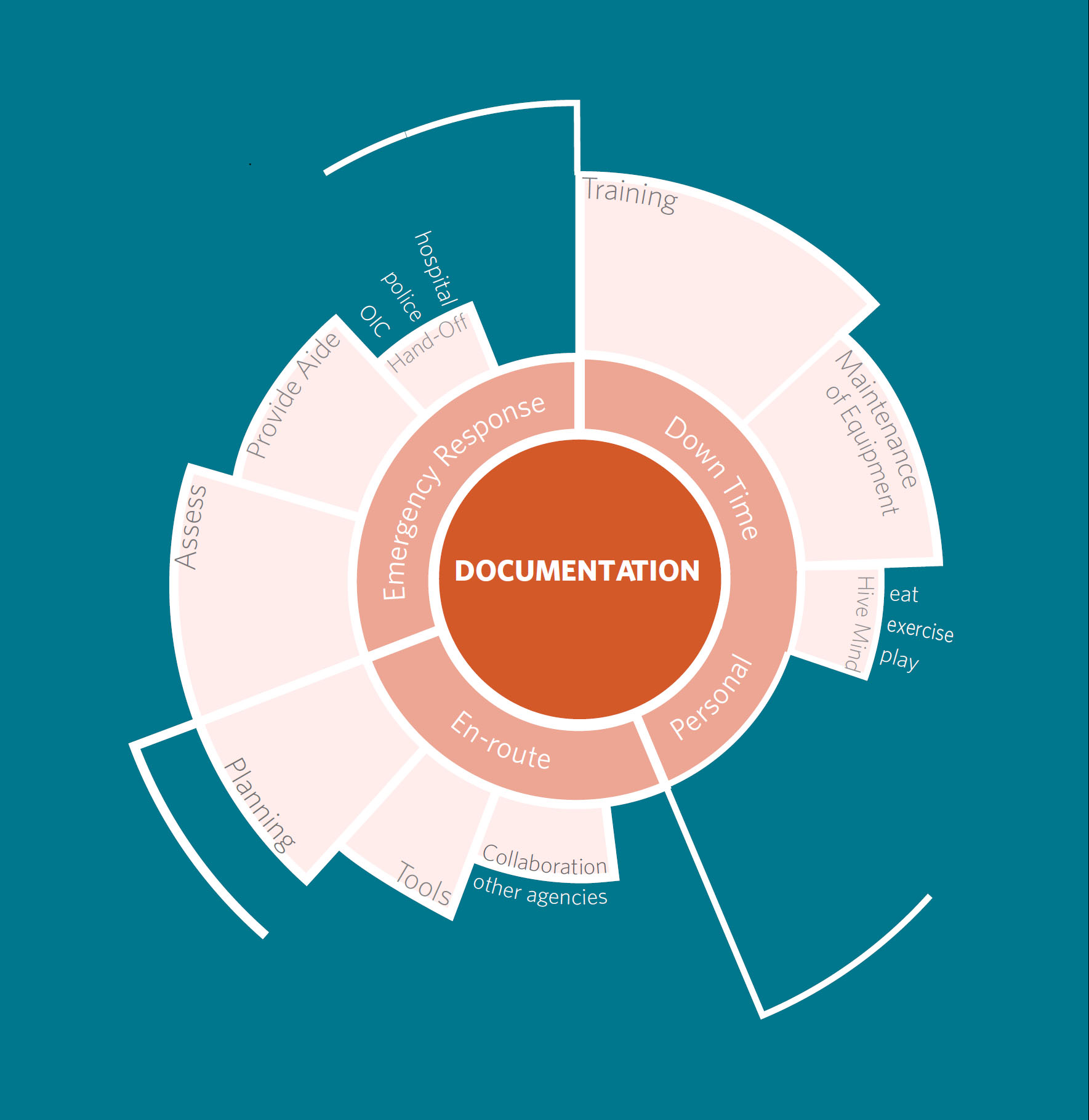

Three insights in particular went on to inform our design direction and intervention.

Documentation is a necessary evil

“The people who put the information into the database are the people who went out... they’ve gotten beat up in the elements for how many hours in the cold and wet and now they’re expected to come back and make a thorough report of what they’ve done”

Quality documentation creates a sense of order

“Garbage in, garbage out. If the reports are badly written, they get thrown out”

“So all of that is tracked so on a more macro scale we can see those trends... this is the average response time”

The chain of command limits responsibility and decreases stress

Responders are one part of a larger system. Every type of first responder relies on other teams to get the job done. Every participant mentioned handing a case off to another team, or working closely with another branch of government responders, to respond to or accurately assess an emergency.

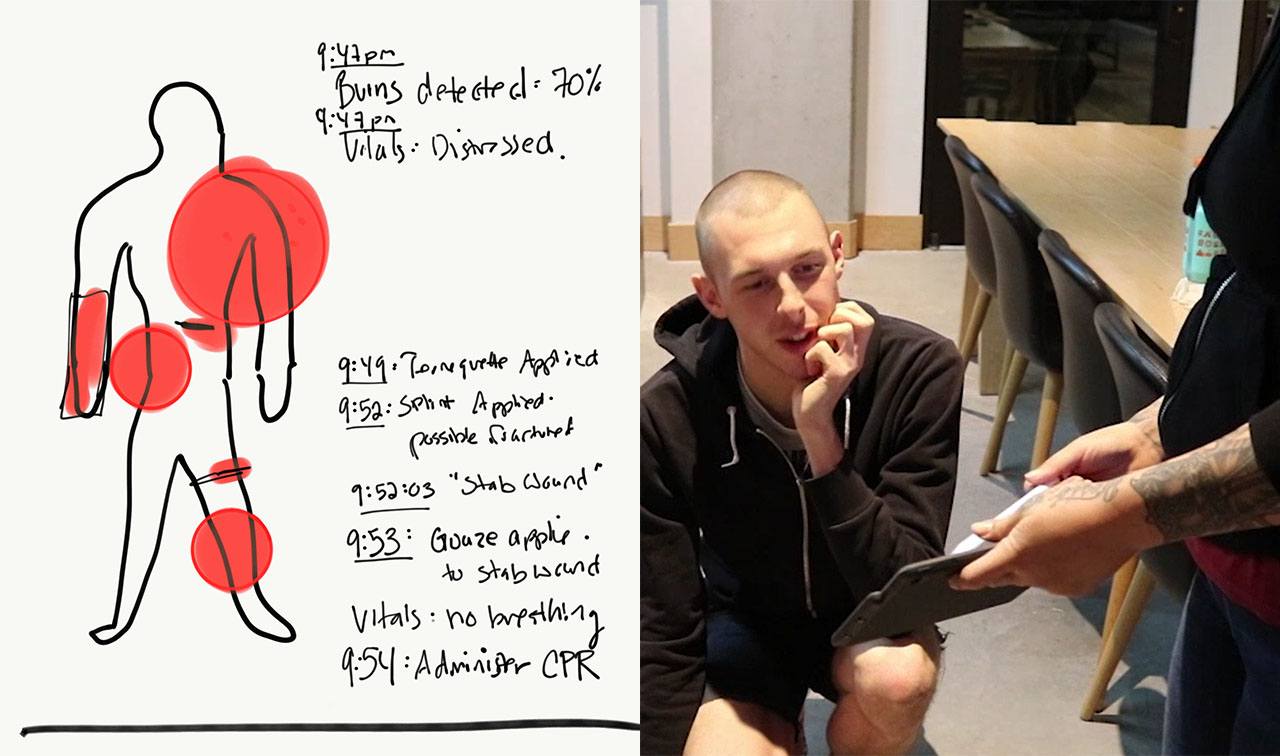

There’s a clear need to update documentation systems. Documentation passes through multiple hands and multiple systems. No part of it is standardized across those systems, yet the systems overlap, which leads to redundancy and duplicated work. The quality of these tools can be low, with one responder describing the Sun Pro system as “30 years out of date, 30 years ago.” Faulty tools can also cause confusion, such as two devices reading conflicting vitals. These issues must be resolved by hand for each call.